Choosing a Medical Device Camera Sensor

Camera sensors are key components of video microscopes and endoscopes, fluorescence imagers, X-ray detectors, multiplexed detectors (i.e. detectors that measure/quantify more than one thing at a time), spectrometers, and imaging interferometers. With many options available and many specifications to consider, choosing a camera sensor for a medical device can be a daunting task. This blog series covers technical trade-offs to consider when incorporating a visible or near-infrared range camera sensor into a medical device.

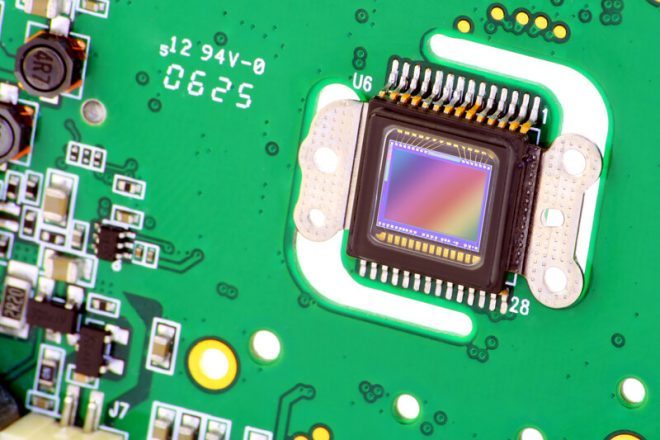

Digital camera sensors typically consist of an array of photosensitive pixels. To capture photographs or videos, a lens is used to form an image on a sensor. This image is recorded by measuring the electric charge generated by the photons of light that impinge upon each of the individual pixels (i.e. photoelectrons)¹. Here are the most important considerations related to the sensor’s resolution and pixel size.

Medical Device Camera Sensor Background: Signal and Noise

In terms of optical camera sensors, signal refers to the magnitude of a measured quantity, usually an electric charge, that is proportional to an amount of light falling on a given photosensitive site. In cases where the measured quantity is an electric charge, signal is usually measured in counts, referring to the number of electrons the incident light generates.

Noise refers to random fluctuations in a measured signal. Assuming a stable source of light, there are three primary sources of noise in an optical measurement with a typical camera sensor: dark noise, read noise and shot noise.

- Dark noise is the result of a thermal phenomenon whereby electrons are spontaneously generated in a detector even when no light is present. Dark noise can be reduced by lowering a detector’s temperature.

- Read noise arises from random fluctuations in the electronic signal chain that measures charge on a detector, processes it, and converts it to a digital value. Read noise is a property of the sensor and electronic design and, like dark noise, it is present even where there is no light on the sensor.

- Shot noise is noise in a measured light signal resulting from the discrete nature of light (i.e. photons) and the inherently stochastic arrival times of photons at a sensor. This noise is proportional to the square root of the signal counts and, for a given signal magnitude in a single exposure, cannot be reduced by changing the method of measurement. Its relative contribution can, however, be reduced through processing methods such as averaging multiple exposures.
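
The three noise sources above can be combined into a rough noise budget. The sketch below is a minimal illustration, not a sensor model from any datasheet: it assumes the sources are independent (so their variances add), that dark noise behaves as Poisson noise on the accumulated dark electrons, and that averaging M exposures improves SNR by a factor of √M. The function names and example numbers are hypothetical.

```python
import math

def total_noise(signal_e, dark_e, read_noise_e):
    """RMS noise (electrons) for a single exposure.

    signal_e     : photoelectrons generated by the incident light
    dark_e       : thermally generated electrons during the exposure (assumed Poisson)
    read_noise_e : RMS read noise of the signal chain, in electrons
    """
    shot_variance = signal_e           # Poisson statistics: variance equals the mean
    dark_variance = dark_e             # assumption: dark electrons are also Poisson
    read_variance = read_noise_e ** 2
    # Independent noise sources add in quadrature.
    return math.sqrt(shot_variance + dark_variance + read_variance)

def snr(signal_e, dark_e, read_noise_e, n_exposures=1):
    """SNR after averaging n_exposures statistically independent exposures."""
    single = signal_e / total_noise(signal_e, dark_e, read_noise_e)
    return single * math.sqrt(n_exposures)

# Hypothetical pixel: 1000 signal electrons, 10 dark electrons, 3 e- read noise.
print(f"single exposure SNR: {snr(1000, 10, 3):.1f}")
print(f"4 averaged exposures: {snr(1000, 10, 3, n_exposures=4):.1f}")
```

With dark and read noise set to zero, the function reduces to the shot-noise limit, where the SNR equals the square root of the signal counts.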

Two important quantities related to signal and noise are the signal-to-noise ratio (SNR) and the dynamic range:

- As the name suggests, the SNR is the ratio between a measured signal and the noise in that signal (from all sources). In terms of imaging, areas of an image with high SNR will appear clear with little apparent noise, whereas noise is more prominent in areas with a low SNR. Details may be difficult to distinguish in the latter case.

- The dynamic range of a sensor is the ratio between the largest and smallest signals that it can measure. Sensors with a high dynamic range can capture both light and dark areas of an image while preserving contrast detail in between. The maximum theoretical dynamic range of a sensor is usually limited on the low end by the amount of read noise and the high end by the sensor’s full well capacity (i.e. the number of photoelectrons that can be generated before the sensor is saturated).
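
As a worked illustration of the dynamic range definition above, the following sketch takes the ratio of full well capacity to read noise and expresses it as a plain ratio, in decibels, and in photographic stops. The 20·log10 decibel convention and the example values are assumptions made for illustration; they are not taken from a particular sensor.

```python
import math

def dynamic_range(full_well_e, read_noise_e):
    """Theoretical maximum dynamic range of a pixel.

    full_well_e  : full well capacity in electrons (largest measurable signal)
    read_noise_e : RMS read noise in electrons (smallest measurable signal)
    """
    ratio = full_well_e / read_noise_e
    return {
        "ratio": ratio,
        "dB": 20 * math.log10(ratio),   # assumed convention: 20*log10 of the ratio
        "stops": math.log2(ratio),      # each stop is a factor of two
    }

# Hypothetical sensor: 10,000 e- full well, 2.5 e- read noise.
dr = dynamic_range(10_000, 2.5)
print(f"{dr['ratio']:.0f}:1, {dr['dB']:.1f} dB, {dr['stops']:.1f} stops")
```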

Medical Device Camera Sensor Resolution

The resolution of a camera sensor refers to its number of pixels, given either as the total number in megapixels (MP) or in terms of the number of horizontal and vertical pixels (e.g. 1920 x 1080). Early consumer digital cameras had relatively low resolutions such as 0.3 MP (640 x 480). The available resolutions of sensors have since increased rapidly, with many smartphones now using 12 MP (4290 x 2800) sensors. Some state-of-the-art cameras have sensor resolutions up to 150 MP [1], and even some high-end smartphones now incorporate 108 MP sensors [2], though the value of such high pixel counts in a smartphone camera is questionable.

The resolution required depends strongly on the application. In cases where one is simply quantifying an amount of light, such as in certain medical assays, it is often more appropriate to simply use a non-imaging detector with a single photosensitive site. However, in such cases, a camera sensor (perhaps with low resolution) could be used for multiplexed detection. In medical imaging applications, the resolution required depends on what is being imaged as well as how it will be displayed to the user. In general, the captured image resolution should be such that relevant details can be displayed to the end-user with at least as high fidelity as is useful. Greater resolutions beyond that may drive cost and/or computer processing requirements higher and are often of little practical benefit.

When displaying the image to the user, we must consider the size and resolution of the display, along with the distance at which it will be viewed. The angular resolution of a human eye with 20/20 vision is approximately 1 arcminute [3], which means that over a 1° arc the human eye can perceive approximately 60 distinct points (at most distances). Therefore, for a given viewing distance and display size, there is a maximum display resolution above which there will be little to no perceptible improvement to the human eye.

Assuming digital zoom is not being used in software, images captured and displayed at very high resolutions such as 12 MP are unlikely to be required if they are to be displayed on a 100 mm diagonal screen viewed at a distance of 300 mm (as may be used for instrument navigation, for example), as the smallest details that can be displayed (as determined by the pixel size) would be approximately 4x smaller than what a typical human can distinguish. On the other hand, a 0.3 MP image is unlikely to be appropriate if the fine details are important and the image will be displayed on a 1.5 m diagonal screen viewed at a distance of 2 m (as may be the case for certain surgical video microscope displays), in which case the pixels in the displayed image will be >3x larger than what the human eye can distinguish and the image will appear pixelated.
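
The two display scenarios above can be checked with a short calculation. This is a rough sketch that assumes square pixels, an image that fills the display at the sensor's aspect ratio, and the 1 arcminute acuity figure quoted above; the function names are illustrative only.

```python
import math

ONE_ARCMINUTE_RAD = math.radians(1 / 60)

def smallest_resolvable_mm(viewing_distance_mm, acuity_rad=ONE_ARCMINUTE_RAD):
    """Smallest detail a 20/20 eye can distinguish at the given distance."""
    return viewing_distance_mm * math.tan(acuity_rad)

def displayed_pixel_mm(diagonal_mm, h_pixels, v_pixels):
    """Size of one displayed pixel, assuming the image fills the screen diagonal."""
    return diagonal_mm / math.hypot(h_pixels, v_pixels)

def eye_to_pixel_ratio(diagonal_mm, viewing_distance_mm, h_pixels, v_pixels):
    """> 1: pixels are finer than the eye can resolve; < 1: pixels are visible."""
    eye = smallest_resolvable_mm(viewing_distance_mm)
    pixel = displayed_pixel_mm(diagonal_mm, h_pixels, v_pixels)
    return eye / pixel

# 12 MP image on a 100 mm diagonal screen viewed from 300 mm.
small_screen = eye_to_pixel_ratio(100, 300, 4290, 2800)
# 0.3 MP image on a 1.5 m diagonal screen viewed from 2 m.
large_screen = eye_to_pixel_ratio(1500, 2000, 640, 480)

print(f"12 MP, small screen: pixels are {small_screen:.1f}x finer than the eye resolves")
print(f"0.3 MP, large screen: pixels are {1 / large_screen:.1f}x coarser than the eye resolves")
```

A ratio near 1 suggests the display resolution is roughly matched to the viewer; values well above 1 indicate pixels that the viewer cannot benefit from without zooming.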

Medical Device Camera Sensor Pixel Size

It should be noted that the number of pixels on a sensor, sometimes called the “pixel resolution”, is not the whole story when it comes to resolution. When producing an image, every camera lens has a minimum feature size that it can resolve at a given wavelength of light². If this feature size is much larger than the size of the pixels, it will be blurred over multiple pixels and the effective resolution will be limited. If the minimum feature size is much smaller than the pixel size, aliasing and other undesirable artifacts can result. Ideally, the lens/detector should be chosen such that the minimum imaged feature size on the detector is approximately twice as large as the pixels. This method maximizes the effective resolution while suppressing artifacts.
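
The "feature roughly twice the pixel size" guideline can be turned into a quick matching check. The sketch below is illustrative: it estimates the minimum feature size with the Rayleigh criterion for a diffraction-limited lens (1.22 × wavelength × f-number), which is an assumption since real lenses are often limited by aberrations, and the ±25% tolerance band around the factor of two is an arbitrary choice.

```python
def rayleigh_feature_um(wavelength_um, f_number):
    """Minimum resolvable feature at the sensor for an ideal, diffraction-limited lens."""
    return 1.22 * wavelength_um * f_number

def describe_sampling(min_feature_um, pixel_pitch_um, tolerance=0.25):
    """Compare the lens's minimum feature size with the pixel pitch.

    Returns (ratio, label), where a ratio of about 2 is the target.
    """
    ratio = min_feature_um / pixel_pitch_um
    if ratio < 2 * (1 - tolerance):
        return ratio, "undersampled"   # pixels too large: risk of aliasing
    if ratio > 2 * (1 + tolerance):
        return ratio, "oversampled"    # pixels too small: lens limits resolution
    return ratio, "matched"

# Hypothetical lens: green light (0.55 um) at f/4.
feature = rayleigh_feature_um(0.55, 4.0)
for pitch_um in (0.7, 1.4, 3.45):
    ratio, label = describe_sampling(feature, pitch_um)
    print(f"pixel pitch {pitch_um} um: feature/pixel = {ratio:.2f} ({label})")
```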

Another case in which the relative size of pixels and that of an imaged feature are important is in spectrometer design. Spectrometers measure the amounts of different colours of light from a source, typically by separating the light into its constituent colours and focusing them onto a line sensor³ (a camera sensor with only a small number of pixels on one axis, and many on the other). The size of the pixels on the sensor informs the size to which each of the constituent colours must be focused to get a desired spectral (colour) resolution. In principle, the focused spot size could be determined first and then a sensor with appropriately sized pixels could be chosen, but that approach runs the risk that such a sensor may not be available.
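
To make the link between pixel size, focused spot size, and spectral resolution concrete, here is a rough estimate. It assumes the spectrum is spread linearly across the full length of the line sensor and that the spectral resolution is set by the width of the focused spot; both are simplifications, and all names and values are hypothetical.

```python
def dispersion_nm_per_um(span_nm, n_pixels, pixel_pitch_um):
    """Wavelength change per micrometre along the sensor (linear dispersion assumed)."""
    sensor_length_um = n_pixels * pixel_pitch_um
    return span_nm / sensor_length_um

def spectral_resolution_nm(span_nm, n_pixels, pixel_pitch_um, spot_width_um):
    """Approximate spectral resolution when the focused spot is the limiting factor."""
    return dispersion_nm_per_um(span_nm, n_pixels, pixel_pitch_um) * spot_width_um

# Hypothetical design: 600 nm span on a 2048-pixel line sensor with 14 um pixels,
# with each colour focused to a 42 um spot (three pixels wide).
resolution = spectral_resolution_nm(600, 2048, 14, 42)
print(f"pixels per spot: {42 / 14:.0f}")
print(f"estimated spectral resolution: {resolution:.2f} nm")
```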

The pixel size also factors into a sensor’s figures of merit. Larger pixels typically have a higher dynamic range than smaller ones and can reach higher signal-to-noise ratios [4]. The reason for this is that larger pixels usually have a greater full well capacity than smaller ones due to their increased photosensitive area, while the read and dark noise do not scale proportionately. By the same token, greater SNRs can be obtained by using the larger capacity of photoelectrons in larger pixels, assuming sufficient signal is available to the sensor to take advantage of this capacity. Noting that shot noise is typically the dominant source of noise except at very low signal levels, and that shot noise scales as the square root of the signal counts, the SNR for a given number of counts N is approximately $N/\sqrt{N} = \sqrt{N}$. Therefore, greater SNRs are possible given the greater full well capacity of larger pixels. In cases where image clarity or fine details in contrast are of the highest importance, a sensor with large pixels may be preferable.
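
Because the shot-noise-limited SNR is the square root of the counts, the best SNR a pixel can reach in one exposure is the square root of its full well capacity, and the counts needed for a target SNR is the target squared. The sketch below illustrates this under the shot-noise-dominated assumption stated above; the full well values are hypothetical.

```python
import math

def peak_snr(full_well_e):
    """Best single-exposure SNR, assuming shot noise dominates at saturation."""
    return math.sqrt(full_well_e)

def required_counts(target_snr):
    """Photoelectrons needed to reach target_snr in the shot-noise limit."""
    return target_snr ** 2

def can_reach(full_well_e, target_snr):
    """True if the pixel can hold enough charge to reach target_snr in one exposure."""
    return required_counts(target_snr) <= full_well_e

# Hypothetical small and large pixels.
for name, full_well in (("small pixel", 5_000), ("large pixel", 40_000)):
    print(f"{name}: peak SNR ~ {peak_snr(full_well):.0f}, "
          f"reaches SNR 100 in one exposure: {can_reach(full_well, 100)}")
```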

There are several important caveats to the above. Supposing the same lens and equal sensor size, images taken using sensors with smaller pixels have a higher spatial resolution⁴. The increased resolution may be more important than dynamic range and SNR, depending on the application. It is also possible to improve the SNR of such images at the cost of pixel resolution by averaging together (binning) adjacent pixels. This can lead to image SNRs as good as those from larger pixels or, in some cases, even better. As a result, smaller pixels/higher resolutions tend to give greater flexibility for a given lens and sensor size.
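
The binning trade-off can be sketched numerically. The example below assumes binning is done digitally after readout, so each binned pixel contributes its own read noise, and it ignores dark noise for simplicity. Summing and averaging give the same SNR, so the sum is used here. The read noise values are hypothetical and chosen only to show that binned small pixels can match or slightly exceed one large pixel of the same total area.

```python
import math

def pixel_snr(signal_e, read_noise_e):
    """SNR of a single pixel with shot noise and read noise."""
    return signal_e / math.sqrt(signal_e + read_noise_e ** 2)

def binned_snr(signal_per_pixel_e, read_noise_e, n_binned):
    """SNR after digitally combining n_binned adjacent pixels."""
    total_signal = n_binned * signal_per_pixel_e
    # Shot variance equals the total signal; read variances add once per pixel.
    total_variance = total_signal + n_binned * read_noise_e ** 2
    return total_signal / math.sqrt(total_variance)

# Four small pixels (1000 e- each, 2 e- read noise) binned 2x2, compared with
# one large pixel of four times the area (4000 e-, 5 e- read noise).
print(f"single small pixel: {pixel_snr(1000, 2):.2f}")
print(f"2x2 binned small pixels: {binned_snr(1000, 2, 4):.2f}")
print(f"one large pixel: {pixel_snr(4000, 5):.2f}")
```

In the shot-noise limit, binning four pixels doubles the SNR while reducing the pixel count by a factor of four.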

If the increased dynamic range and SNR of large pixels and a high resolution are both required, a larger sensor (with correspondingly large pixels) can be used. However, compared to the smaller sensors, this method would require the use of a different lens with a longer focal length if the field-of-view of the image is to remain the same. Such a lens would almost always be larger (and often more expensive) than the corresponding lens for a smaller sensor. The larger size may be prohibitive in certain space-constrained designs and may necessitate the use of a smaller lens and smaller sensor with the same pixel resolution. If the resolution of such a smaller sensor is to be maintained (i.e. binning is not an option), other methods such as averaging multiple exposures may be needed to achieve the SNR and/or dynamic range afforded by larger pixels.
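
The focal length needed to hold the field-of-view constant scales with sensor size. The sketch below uses the simple relation for a distant object, focal length = (sensor width / 2) / tan(FOV / 2), which is an idealization that ignores distortion and close-focus effects. The sensor widths are illustrative values.

```python
import math

def focal_length_mm(sensor_width_mm, fov_deg):
    """Focal length giving the stated horizontal field-of-view for a distant object."""
    half_fov_rad = math.radians(fov_deg) / 2
    return (sensor_width_mm / 2) / math.tan(half_fov_rad)

# Same 60 degree horizontal field-of-view on two hypothetical sensor widths.
for width_mm in (4.8, 13.2):
    print(f"{width_mm} mm wide sensor: f ~ {focal_length_mm(width_mm, 60):.2f} mm")
```

For a fixed field-of-view the focal length grows in direct proportion to the sensor width, which is why the lens for a larger sensor tends to be physically larger.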

Conclusion

This blog discussed how the resolutions and pixel sizes of a medical device camera sensor factor into medical device designs. The key takeaways are:

- Resolution should be as high as necessary to resolve all the relevant details for a given use case, after factoring in how the image will be displayed to the end-user. Required resolutions can be very low, even a single pixel in some non-imaging applications, or very high, such as for a high-detail image to be viewed from a relatively short distance on a large screen. For imaging applications, an appropriate lens design is required to take full advantage of a sensor’s pixel resolution.

- For a given sensor size, smaller pixels, and therefore higher resolutions, tend to give greater flexibility than larger pixels. Assuming a lens with sufficient resolving power, smaller pixels provide greater spatial resolution. Although larger pixels typically have greater dynamic range and SNR than smaller ones, the increased pixel resolution afforded by smaller pixels may be “traded” for dynamic range/SNR by binning adjacent pixels.

- Dynamic range/SNR and resolution can be simultaneously optimized by using a large sensor having both high pixel resolution and large pixels. Using such a sensor would usually require a larger and often more expensive lens than that used with smaller sensors. Such a lens may not be an option in some space-constrained designs, which would necessitate the use of a smaller lens and sensor with the same pixel resolution if the resolution is to be maintained. In that case, other methods such as averaging multiple exposures may be needed to reach a required SNR and/or dynamic range.

The next blog in this series will discuss incident light irradiance, exposure time and sensor gain and how they affect the signal-to-noise ratio (SNR) and/or dynamic range, as well as considerations related to a sensor’s frame rate.

¹ For colour camera sensors, the way the image is captured is slightly more complex than described here. Colour image capture is covered in a subsequent blog.

² Choosing a lens for a camera is as complex as choosing a camera sensor and the details are outside of the scope of this blog.

³ Usually, the focused feature is an image of a very narrow slit through which the light to be measured passes. This image is focused onto the sensor’s pixels by one or more lenses, possibly with magnification/demagnification.

⁴ Here we are assuming that the lens has sufficient resolving power to take advantage of the increased pixel resolution.

References

[1] R. Lawton, “The 12 Highest Resolution Cameras You Can Buy Today: Ultimate Pro Cameras,” Digital Camera World, 12 June 2020. [Online]. Available: https://www.digitalcameraworld.com/buying-guides/the-10-highest-resolution-cameras-you-can-buy-today. [Accessed 6 November 2020].

[2] The Smartphone Photographer, “Phones With The Highest Megapixel Camera,” [Online]. Available: https://thesmartphonephotographer.com/phones-with-highest-megapixels/. [Accessed 16 November 2020].

[3] M. Yanoff and J. S. Duker, Ophthalmology, 3rd ed., Elsevier, 2009, p. 54.

[4] R. N. Clark, “Digital Cameras: Does Pixel Size Matter?,” ClarkVision.com, 2015. [Online]. Available: https://clarkvision.com/articles/does.pixel.size.matter/. [Accessed 8 April 2021].

Ryan Field is an Optical Engineer at StarFish Medical. Ryan holds a PhD in Physics from the University of Toronto. As a postdoctoral fellow, he worked on the development of high-power picosecond infrared laser systems for surgical applications, as well as a spectrometer made from household materials.

© Can Stock Photo / vilax