Recent Advances in Biophotonics

TL;DR

- Two emerging biophotonics imaging techniques could expand how clinicians visualize tissue and diagnose disease.

- A new anterior eye imaging method uses backscattered scleral light to produce low-cost, non-contact corneal images.

- A computational holography technique reconstructs images through highly scattering tissue using randomized speckle illumination and AI optimization.

- Turning these technologies into medical devices will require solving challenges in cost, robustness, regulatory approval, and clinical market fit.

I was recently looking through the OPTICA trade journal Optics and Photonics News – specifically its summary of “Optics in 2025[1].” A few highlights were of particular interest to me in terms of their potential applicability to future medical devices. I thought I’d share an overview of two of these highlights with you.

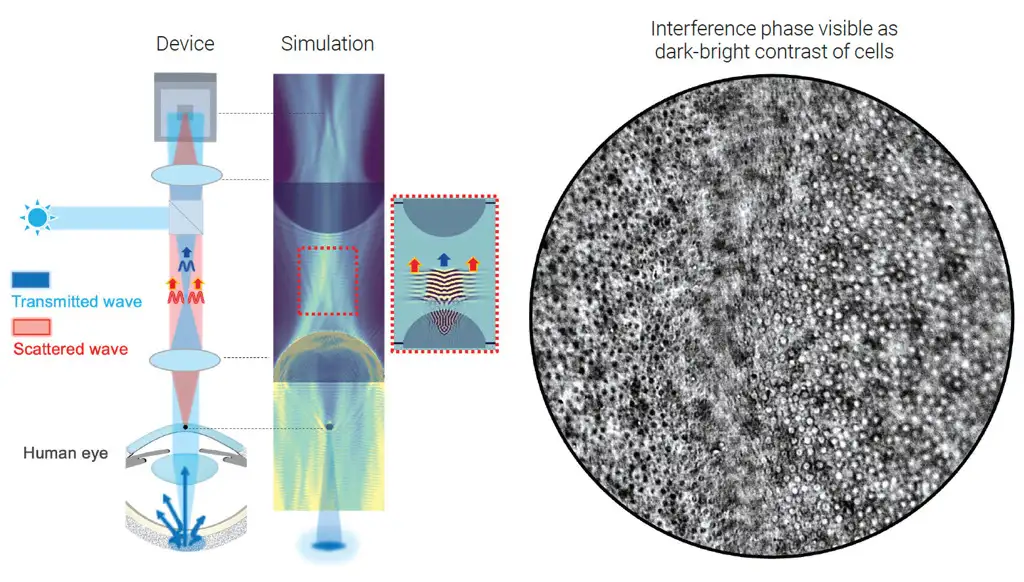

Imaging the Anterior Segment of the Eye

The first featured item is a new imaging modality for the anterior segment of the eye. Since the eye is transparent, it naturally lends itself to optical imaging. Samer Alhaddad and his colleagues at Institut Langevin and Quinze-Vingts National Ophthalmology Hospital in Paris have found a way to image the objects in the cornea through the interference they induce in light coming from within the eye[2]. To do this, they use light backscattered from the posterior sclera as a light source to illuminate the anterior segment in transmission.

In brief, a collimated and polarized near-infrared (NIR) light source is shone onto the eye through an objective lens: the cornea is at the front focal plane of this objective lens. The incoming light is pre-focused at the back focal plane of this objective and is transmitted onto the eye as a collimated beam. The eye’s anterior segment (cornea and crystalline lens) then brings this incoming light to a focused spot on the posterior sclera. The backscattered light from the sclera is re-collimated by the anterior segment, but localized structures in the cornea imprint a phase difference on the light as it passes back through, while simultaneously introducing divergence into the otherwise-collimated beam.

The back focal point of the objective lens is imaged onto a camera by a tube lens. Collimated light from the posterior sclera is brought back to a focus at this back focal point and is thus recollimated by the tube lens onto the sensor of a consumer-grade camera. Objects in the cornea, on the other hand, lie at the front focus of the objective lens. The objective lens collimates the light they scatter (diffract), which is then refocused onto the camera sensor by the tube lens, phase shift and all.

Thus, this technique records the localized phase shifts introduced by corneal structures using the recollimated light from the posterior sclera as a phase reference. Features at different depths may be imaged simply by refocusing the system. Image contrast and depth of focus may be traded off against each other by changing the focal length of the objective lens – which changes the size of the illuminated spot on the posterior sclera and thus the effective numerical aperture of the imaging system.
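The depth-of-focus side of that trade-off can be illustrated with textbook diffraction scaling. The sketch below is only a rough guide: it assumes the standard Rayleigh resolution and n·λ/NA² depth-of-focus formulas, an 850 nm wavelength, a corneal refractive index of 1.376, and hypothetical numerical aperture values. None of these numbers are taken from the paper.

```python
def lateral_resolution_um(wavelength_um: float, na: float) -> float:
    """Rayleigh criterion: smallest resolvable separation, in micrometres."""
    return 0.61 * wavelength_um / na


def depth_of_focus_um(wavelength_um: float, na: float, n: float = 1.376) -> float:
    """Diffraction-limited depth of focus, n * lambda / NA^2, in micrometres."""
    return n * wavelength_um / na ** 2


WAVELENGTH_UM = 0.85  # assumed NIR wavelength, for illustration only

for na in (0.1, 0.2, 0.4):  # hypothetical effective numerical apertures
    res = lateral_resolution_um(WAVELENGTH_UM, na)
    dof = depth_of_focus_um(WAVELENGTH_UM, na)
    print(f"NA={na:.1f}: resolution ~{res:.2f} um, depth of focus ~{dof:.1f} um")
```

Doubling the effective numerical aperture halves the resolvable feature size but cuts the depth of focus by a factor of four, which is why a single parameter can be used to tune the system toward either thin optical sections or a deeper in-focus range.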

An advantage to using a polarized illumination source is that multiple scattering in the sclera partially depolarizes the retroreflected beam, so that polarization optics can separate the depolarized backscatter from the strong – but still polarized – specular reflections off the cornea and crystalline lens.
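To see why this helps, consider a linear analyzer placed in front of the camera and crossed with the illumination polarization. This arrangement is an assumption made for illustration; the exact polarization optics are not described here. The sketch below uses Malus's law for the polarized part of the light and a 50% pass fraction for the unpolarized part. The brightness ratio, degree of polarization, and analyzer leakage are hypothetical numbers.

```python
import math


def analyzer_transmission(dop: float, angle_deg: float, leakage: float = 0.0) -> float:
    """Fraction of incident intensity passed by a linear analyzer.

    dop       -- degree of polarization of the incoming light (0 to 1)
    angle_deg -- angle between the analyzer axis and the polarized component
    leakage   -- fraction of the blocked polarization that leaks through
    """
    c2 = math.cos(math.radians(angle_deg)) ** 2
    polarized = dop * (c2 + leakage * (1.0 - c2))        # Malus's law plus leakage
    unpolarized = (1.0 - dop) * (1.0 + leakage) / 2.0    # half of unpolarized light passes
    return polarized + unpolarized


# Hypothetical scene: specular glint 100x brighter than the scleral backscatter.
specular_in, backscatter_in = 100.0, 1.0

# Crossed analyzer (90 degrees); glint stays fully polarized, backscatter is mostly depolarized.
specular_out = specular_in * analyzer_transmission(dop=1.0, angle_deg=90, leakage=1e-4)
backscatter_out = backscatter_in * analyzer_transmission(dop=0.2, angle_deg=90, leakage=1e-4)

print(f"signal-to-glint ratio before analyzer: {backscatter_in / specular_in:.3f}")
print(f"signal-to-glint ratio after analyzer:  {backscatter_out / specular_out:.1f}")
```

In this toy model the analyzer costs somewhat more than half of the useful backscatter, but it suppresses the polarized glint by the extinction ratio of the polarizer, so the ratio of signal to glint improves by several orders of magnitude.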

Using a benchtop prototype of this technology, the researchers were able to image the corneal epithelium of several human subjects, as well as their sub-basal nerve plexus.

Other methods exist to image anterior-segment anatomy, such as confocal microscopy or anterior-segment optical coherence tomography (AS-OCT). However, the former requires contact with the eye and has a limited field of view, whereas the cost of AS-OCT systems can be prohibitive for some clinics. The current work offers a new, low-cost, non-contact ocular imaging modality – and may be applicable to screening out high-risk patients for procedures such as LASIK, or to assessing the impact of corneal infections.

Unscrambled Light, Disordered Layers

The ocular imaging technique described above capitalizes on the fact that the eye is transparent to most wavelengths of light – a property that makes it ideal for optical imaging of its physiology. However, it also highlights the major complication for using optical imaging to uncover the secrets of other parts of the anatomy. Even if human tissue doesn’t absorb light, it often is highly scattering and scrambles the phase of the optical radiation that passes through it. This random scattering is like static on a radio signal and scrambles the information carried by light travelling to or from the interior of the body. This limits the effective depth for optical imaging[3].
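The depth limit can be made concrete with a back-of-the-envelope estimate. The fraction of light that travels a path without being scattered falls off exponentially with path length (Beer-Lambert attenuation). The sketch below assumes a scattering mean free path of 0.1 mm, which is only an order-of-magnitude figure for soft tissue, and counts the path twice for light that must travel in and back out.

```python
import math


def ballistic_fraction(depth_mm: float,
                       mean_free_path_mm: float = 0.1,
                       round_trip: bool = True) -> float:
    """Fraction of light still unscattered after reaching `depth_mm`.

    Uses exp(-path / mean_free_path); the default mean free path is an
    assumed, illustrative value rather than a measured one.
    """
    path_mm = 2.0 * depth_mm if round_trip else depth_mm
    return math.exp(-path_mm / mean_free_path_mm)


for depth in (0.1, 0.5, 1.0, 2.0):
    print(f"depth {depth:.1f} mm: unscattered fraction ~{ballistic_fraction(depth):.1e}")
```

Under these assumptions the unscattered signal drops by many orders of magnitude within the first millimetre, which is why techniques that can make use of scattered light are attractive.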

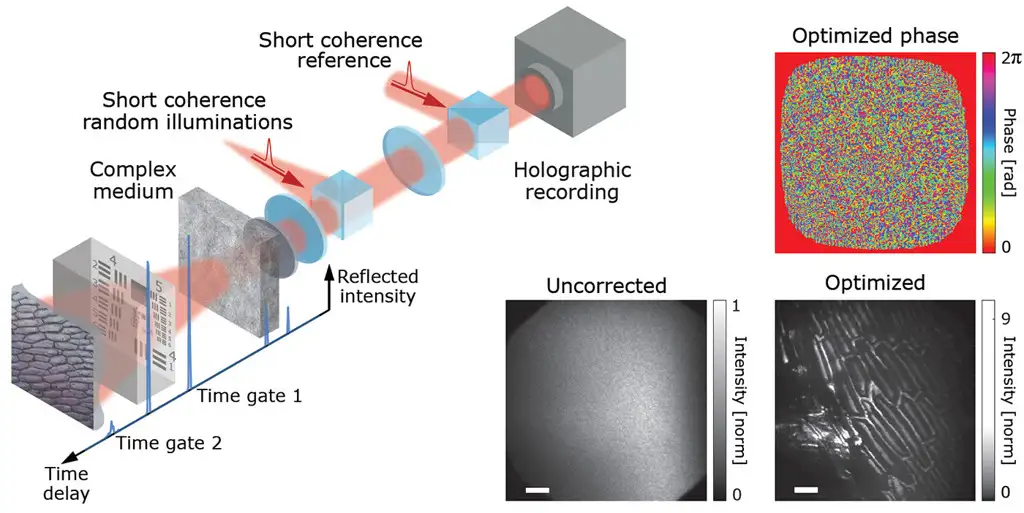

Omri Haim and his coworkers at the Institute of Applied Physics at Hebrew University have uncovered a new approach for unscrambling the light passing through one or more disordered layers[4]. Ironically, they do this by illuminating an obscured object with pre-disordered light – in their case, coming from a spinning optical diffuser illuminated by a laser source. The diffuser creates a series of speckle patterns, in which light from different parts of the ground-glass diffuser carries a wide, randomly distributed set of optical phases. On its way to the object of interest (in this proof of principle, either a USAF resolution target or a section of onion skin), the light from each orientation of the spinning diffuser has its phase further scrambled by one or several layers of random scatterers (e.g. strongly scattering tissue or – in this proof of principle – further ground-glass diffusers). Finally, the light scatters off the object(s) to be imaged and back through the “tissue” sample before being recombined holographically with a copy of the randomly scattered incoming light. The resulting hologram is recorded on a high-sensitivity scientific (CMOS) camera.

N = 25 to 100 such holograms are recorded on the camera, corresponding to N different spinning-diffuser speckle patterns. For each recording, the phase distribution of the light field is completely but differently randomized by the rotating diffuser and tissue scattering. However, the same information from the hidden object (its angular spectrum) is contained in each of these random permutations.

Rather than trying to undo the phase distortions optically, Haim and colleagues harness the power of parallel computation and automatic differentiation – in this case using the inherently vectorized PyTorch library. They simulate the optical effect of a phase-correction spatial modulator and optimize the image contrast of the field back-propagated through the “virtual phase mask” over the ensemble of N recorded holograms. The success of this approach depends strongly on the optimization target. However, optimizing to maximize image entropy appears to yield robust and efficient convergence. Remarkably, this approach can recover an image scrambled over more than 190,000 speckle modes using as few as 25 distinct random-phase illumination patterns! Further, the exact depth of the object to be imaged does not need to be known ahead of time but, rather, comes out of the optimization iterations.
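The idea of optimizing a virtual phase mask with automatic differentiation can be sketched in a few lines of PyTorch. This is a toy model under loud assumptions, not the authors' code: the scatterer is a single thin random phase mask sitting in the Fourier plane of the object, the illumination is phase-only, the recorded complex fields are noise-free, and normalized image variance is used as a stand-in contrast metric rather than the entropy target mentioned above. All function names are hypothetical.

```python
import math
import torch


def simulate_holograms(obj, n_patterns, gen):
    """Toy forward model: complex fields recorded for n_patterns illuminations."""
    size = obj.shape[-1]  # assumes a square object
    # Random-phase illumination patterns (one per diffuser orientation).
    illum_phase = 2 * math.pi * torch.rand(n_patterns, size, size, generator=gen)
    illum = torch.polar(torch.ones_like(illum_phase), illum_phase)
    # One fixed, unknown phase mask standing in for the scattering layer.
    scat_phase = 2 * math.pi * torch.rand(size, size, generator=gen)
    scatterer = torch.polar(torch.ones_like(scat_phase), scat_phase)
    return torch.fft.fft2(obj * illum) * scatterer


def corrected_image(fields, theta):
    """Apply the virtual phase mask exp(-i*theta), back-propagate, sum intensities."""
    mask = torch.complex(torch.cos(theta), -torch.sin(theta))
    back = torch.fft.ifft2(fields * mask)
    return (back.real ** 2 + back.imag ** 2).sum(dim=0)


def contrast(image):
    """Normalized variance of the image (assumed stand-in sharpness metric)."""
    return (image / image.mean()).var()


def optimize_mask(fields, steps=200, lr=0.1):
    """Gradient ascent on image contrast with respect to the virtual phase mask."""
    theta = torch.zeros(fields.shape[-2:], requires_grad=True)
    optimizer = torch.optim.Adam([theta], lr=lr)
    history = []
    for _ in range(steps):
        optimizer.zero_grad()
        score = contrast(corrected_image(fields, theta))
        (-score).backward()  # maximize contrast by minimizing its negative
        optimizer.step()
        history.append(score.item())
    return theta.detach(), history


if __name__ == "__main__":
    gen = torch.Generator().manual_seed(0)
    target = torch.zeros(32, 32)
    target[8:24, 12] = 1.0   # two bars as a stand-in for a resolution target
    target[8:24, 20] = 1.0
    fields = simulate_holograms(target, n_patterns=25, gen=gen)
    theta, history = optimize_mask(fields)
    print(f"contrast: start {history[0]:.3f}, end {history[-1]:.3f}")
```

Because every hologram passes through the same scatterer, a single mask that sharpens the summed image is rewarded across the whole ensemble. A contrast metric cannot see a linear phase ramp in the mask, so the recovered image may come out laterally shifted.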

Using the same approach, the researchers can image a single object through multiple layers of thin scatterers. In addition, the same computational approach may be used to reconstruct the “image” carried by a multi-core bundle of optical fibers – promising new options for lensless endoscopic imaging. Finally, by using a pulsed source for illumination – rather than a continuous one – the technique can image multiple objects, each of which is at a different depth beneath the scattering layer.

The researchers have not yet demonstrated the applicability of this technique to an object contained in a continuous volume of scattering material, such as skin or breast tissue. But this is an area they are actively pursuing and the approach seems highly promising.

The two technologies described above are exciting extensions to existing work and may offer new optical insights into the body. But the journey from technology to product is a long one, with multiple hurdles along the way.

From a product technology readiness perspective, the ocular imaging work seems further along. The requisite sub-components are readily available and the component cost seems lower (LEDs, microscope objectives, polarization optics, and commercial-quality sensors). A major technical challenge would be ensuring robust performance of the polarization optics for high-aperture or large-angle illumination, while maintaining an acceptable cost of goods sold (COGS).

On the other hand, the technical lift for the disordered-imaging technique seems considerably higher. For broadest applicability, the researchers need to demonstrate imaging through volumetric scattering media, rather than simply thin layers. To image at multiple depths, a bright time-gated source will be required: for their current demonstration, the researchers used a femtosecond pulsed laser – but this is also a complex technology. Furthermore, the technique requires sensitive, low-noise cameras for recording the holograms, and high performance usually entails high cost. Finally, the approach requires interferometric recombination of two spatially separated light paths. Although the same challenge has been met in OCT systems, ensuring coherence and phase matching between two interferometer arms is difficult to achieve in a robust, productizable manner.

Assuming the technical hurdles can be surmounted and the technology packaged in a robust and user-friendly form factor, the device itself would still not be a product, per se. A product is a technology with a well-identified market and a strategy for obtaining and retaining acceptable market share. The raw cost of materials needs to be supported by the remuneration scheme for treatments (e.g. Medicare billing codes for products entering the American market), while still covering assembly, overhead, quality assurance, and marketing costs. These latter crucial elements typically inflate the “raw bill of materials” cost by a factor of 4 to as much as 8. As a medical device, the product also needs to clear regulatory hurdles. More end-use applications entail more complex proof of safety and effectiveness, driving up clinical trial complexity, duration, and cost. Incremental technology improvements may have a lower burden of proof but may also have more difficulty securing market share over incumbents.

Moving Forward

All these factors shape the path of development and commercialization, from concept/technology through prototyping and production. Navigating this path requires money. Investors will be looking for a clear timeline to eventual profitability, or at least a return on their investment. They’ll request development milestones along the way – either to help them monitor their investment, or as an opportunity to recoup some of their investment before the end game.

The road can be challenging for designers and investors who seek to turn an exciting new imaging modality into a compelling new imaging product. But it is these new products that drive advanced medical insights and open new treatment pathways. I’ll look forward to seeing how these two new technologies evolve and become integrated into tomorrow’s medical device products.

Brian King is Principal Optical Systems Engineer at StarFish Medical. Previously Manager of Optical Engineering and Systems Engineering at Cymer Semiconductor/ASML, Brian was an Assistant Professor at McMaster University. Brian holds a B.Sc in Mathematical Physics from SFU, and an M.S. and Ph.D. in Physics from the University of Colorado at Boulder. His research centered on implementing quantum information processing with trapped, laser cooled atoms – often single atoms confined in radiofrequency ion traps operating at ultrahigh vacuum.


References

[1] Optics and Photonics News 36, 12 (Dec. 2025), pp. 26-57.

[2] Mazlin, Boccara, Alhaddad, Optics and Photonics News 36, 12 (Dec. 2025), p. 34; Alhaddad et al., Nat. Commun. 16, 7838 (2025).

[3] Other techniques, such as pulse-flow oximetry or optical perfusion measurements, encode information from deep-scattered tissues spectroscopically, rather than through direct measurement.

[4] Haim, Boger-Lombard, Katz, Optics and Photonics News 36, 12 (Dec. 2025), p. 45; Haim, Boger-Lombard, Katz, Nat. Photon. 19, 44 (2025).
